Introduction to EU AI Act News

Artificial Intelligence regulation has moved from theoretical debate to enforceable law. As of March 2026, Regulation (EU) 2024/1689, commonly known as the EU AI Act, is actively reshaping the global technological landscape. With the most significant deadline for high-risk systems, August 2, 2026, approaching, organizations must move beyond “awareness” into “operational execution.”

This guide covers the most important EU AI Act news: the November 19, 2025 Digital Omnibus proposal, upcoming enforcement timelines, and the latest guidance from the European AI Office.

The High Stakes of the August 2026 Deadline

The global tech community is currently fixated on August 2, 2026. This date marks the “full application” of the EU AI Act for most high-risk AI systems. Non-compliance carries financial as well as legal risk: penalties for the most serious violations reach up to €35 million or 7% of total global annual turnover, whichever is higher. Unlike previous voluntary ethical frameworks, the AI Act is a mandatory product safety regulation with extraterritorial reach. If the output of your AI system is used in the EU, you are subject to its jurisdiction, regardless of where your servers are located.
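The penalty ceiling described above is a simple "higher of two values" rule, which is easy to misread as a flat cap. The sketch below works through the arithmetic. The function name `max_fine_eur` and the two example turnover figures are illustrative assumptions, and the result is only the upper bound of the fine, not the amount a regulator would actually impose.

```python
def max_fine_eur(global_turnover_eur: float,
                 fixed_cap_eur: float = 35_000_000,
                 turnover_percent: float = 7) -> float:
    """Upper bound of the fine: the higher of the fixed cap and the turnover share."""
    if global_turnover_eur < 0:
        raise ValueError("turnover must be non-negative")
    turnover_based = global_turnover_eur * turnover_percent / 100
    return max(fixed_cap_eur, turnover_based)


# Hypothetical firm with EUR 200 million turnover: 7% is 14 million,
# so the EUR 35 million fixed amount is the higher value.
small_firm_ceiling = max_fine_eur(200_000_000)

# Hypothetical firm with EUR 2 billion turnover: 7% is 140 million,
# which exceeds the fixed amount.
large_firm_ceiling = max_fine_eur(2_000_000_000)
```

Under these figures, the turnover-based amount overtakes the fixed amount once global turnover passes €500 million.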

The Digital Omnibus Breakthrough (November 2025 News)

On November 19, 2025, the European Commission introduced the Digital Simplification Package, colloquially known as the Digital Omnibus on AI. This was a critical turning point for the regulation.

Key Amendments of the Digital Omnibus

The November 2025 proposal was designed to enhance predictability and workability. Lucilla Sioli, Director of the EU AI Office, emphasized that the goal was to “simplify the implementation without diluting the safety standards.”

- Readiness-Based Application (Article 113): The Commission proposed that obligations for certain high-risk systems should only apply once adequate supporting measures, such as harmonized standards and common specifications, are fully available.

- Support for Small Mid-Caps: The “SME” privileges, including reduced penalty ceilings and simplified documentation, were extended to “small mid-caps” to prevent regulatory stifling of growing tech firms.

- Bias Detection Legal Basis: A new Article 4a was introduced, allowing providers to process special categories of personal data (under strict safeguards) specifically for bias detection and correction, solving a major friction point with the GDPR.

Navigating the Technical Architecture and EUR-Lex Resources

To achieve compliance, developers and legal teams should work from the official resources provided by the Union. The latest version of the EU AI Act is available as a PDF on the EUR-Lex portal, the official database for EU law.

The AI Act Explorer and Official Documentation

The AI Act Explorer has become the primary tool for navigating the 113 articles and 13 annexes. It allows users to filter obligations based on their role: Provider, Deployer, Importer, or Distributor.

For technical leads, the General-Purpose AI (GPAI) Code of Practice, published in July 2025, describes how model providers can meet their obligations, including those for models with “Systemic Risk.” A model whose cumulative training compute exceeds 10^25 floating-point operations (FLOPs) is presumed to pose systemic risk and is subject to rigorous adversarial testing and serious incident reporting.
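To make the 10^25 threshold concrete, here is a minimal sketch of a compute check. It assumes the widely used rule of thumb that training a dense model costs roughly 6 floating-point operations per parameter per training token. That heuristic is not part of the AI Act, and the two models are hypothetical, so treat the output as a rough screening estimate rather than a legal determination.

```python
SYSTEMIC_RISK_THRESHOLD_FLOPS = 1e25  # threshold quoted in this article


def estimate_training_flops(n_parameters: float, n_training_tokens: float) -> float:
    """Rough estimate using the ~6 * parameters * tokens heuristic (an assumption)."""
    return 6.0 * n_parameters * n_training_tokens


def exceeds_systemic_risk_threshold(training_flops: float) -> bool:
    """True when the estimated cumulative training compute is above the threshold."""
    return training_flops > SYSTEMIC_RISK_THRESHOLD_FLOPS


# Hypothetical model A: 70 billion parameters, 15 trillion tokens (about 6.3e24).
flops_a = estimate_training_flops(70e9, 15e12)

# Hypothetical model B: 400 billion parameters, 15 trillion tokens (about 3.6e25).
flops_b = estimate_training_flops(400e9, 15e12)

model_a_flagged = exceeds_systemic_risk_threshold(flops_a)
model_b_flagged = exceeds_systemic_risk_threshold(flops_b)
```

With these assumed figures, model A stays below the threshold and model B exceeds it. A provider close to the line would need an actual compute accounting rather than this estimate.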

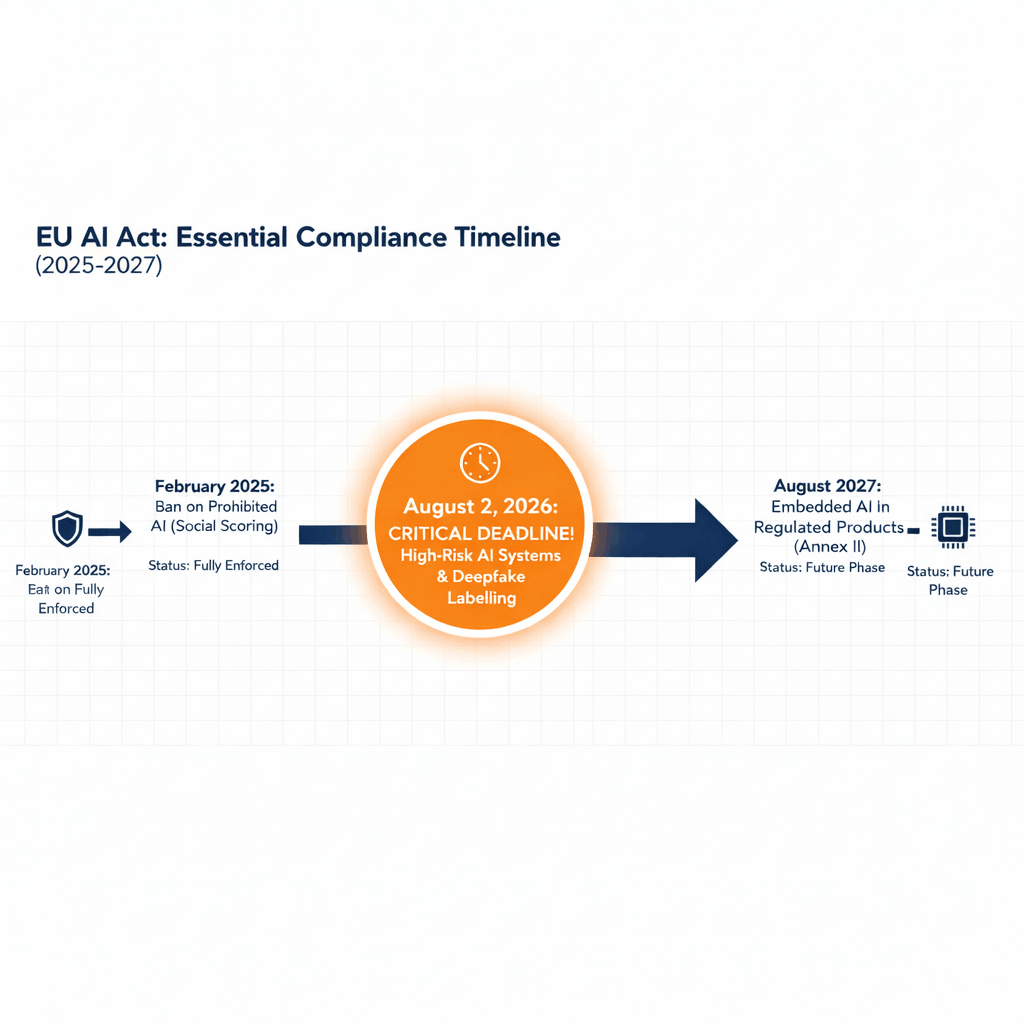

The Essential Compliance Timeline (2025 to 2027)

Businesses should align their product roadmaps with the following timeline.

| Milestone Date | Requirement / Regulation Phase | Enforcement Status |
| --- | --- | --- |
| February 2, 2025 | Ban on Prohibited AI (Social Scoring, Biometric Categorization) | Fully Enforced |
| August 2, 2025 | GPAI Transparency & Governance Rules | Active |
| November 19, 2025 | Digital Omnibus Simplification Proposal | In Transition |
| February 2026 | Release of High-Risk Classification Guidelines (Article 6) | Recent Update |
| August 2, 2026 | Major Deadline: High-Risk AI & Transparency Obligations | Critical Target |
| August 2, 2027 | Obligations for Annex I (Embedded AI in Regulated Products) | Future |
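For roadmap planning, the application dates in the table can be encoded as data and queried by date. The sketch below is one way to do that. It includes only the four rows that mark obligations starting to apply and leaves out the proposal and guideline rows. The names `MILESTONES`, `obligations_in_force`, and `next_milestone` are illustrative.

```python
from datetime import date

# Application dates taken from the timeline table above.
MILESTONES = [
    (date(2025, 2, 2), "Ban on prohibited AI practices"),
    (date(2025, 8, 2), "GPAI transparency and governance rules"),
    (date(2026, 8, 2), "High-risk AI and transparency obligations"),
    (date(2027, 8, 2), "Obligations for Annex I (embedded AI in regulated products)"),
]


def obligations_in_force(on: date) -> list[str]:
    """Labels of all milestones whose start date is on or before the given day."""
    return [label for start, label in MILESTONES if start <= on]


def next_milestone(on: date):
    """Earliest milestone strictly after the given day, or None if none remain."""
    upcoming = [(start, label) for start, label in MILESTONES if start > on]
    return min(upcoming) if upcoming else None


today = date(2026, 3, 1)  # example planning date
active = obligations_in_force(today)
upcoming = next_milestone(today)
days_left = (upcoming[0] - today).days if upcoming else None
```

Keeping the dates in one list means a later change to the timeline, such as an adopted extension, only needs to be made in one place.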

The Feb 2026 Article 6 Guidelines: What’s New?

The February 2026 release from the European AI Office has finally cleared the “legal fog” regarding High-Risk classification. Key takeaways include:

- The ‘Significant Harm’ Threshold: AI systems are only “High-Risk” if they pose a significant risk of harm to health, safety, or fundamental rights.

- Purely Accessory Tasks: If your AI performs a “purely accessory” task (like spell-check or basic data organizing) within a high-risk process, it may now be exempt from full compliance, a huge relief for developers.

- Self-Assessment Requirement: Providers must now document their “Annex III” assessment before placing the product on the market to avoid immediate €35M fines.

The 2027 Strategy: Understanding the Compliance Extension

The Digital Omnibus proposal (November 19, 2025) introduced vital breathing room for the tech ecosystem to prevent an “innovation exodus” from Europe. While August 2, 2026 remains the hard deadline for all newly launched AI systems, there is a significant shift for legacy technology. The Omnibus suggests pushing the enforcement window to December 2027 for high-risk AI systems that were already operational before the 2026 cutoff.

Strategic Takeaway: For SMEs and Small Mid-Caps, this is a critical financial advantage. If your existing AI deployments meet the eligibility criteria for this extension, you can defer heavy audit and third-party certification costs for an additional 16 months. Use this period to align your data governance with the latest 2026 standards without the pressure of immediate penalties.
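The 16-month figure follows from counting calendar months between the August 2026 deadline and the proposed December 2027 window. As a quick check (the helper name `months_between` is illustrative):

```python
def months_between(start_year: int, start_month: int,
                   end_year: int, end_month: int) -> int:
    """Whole calendar months from the start month to the end month."""
    return (end_year - start_year) * 12 + (end_month - start_month)


# August 2026 to December 2027.
extension_months = months_between(2026, 8, 2027, 12)
```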

Expert Analysis on EU AI Act News: Lucilla Sioli and the European AI Office

In a high-level lecture on February 26, 2026, Lucilla Sioli reaffirmed Europe’s commitment to “Digital Sovereignty.” She noted that while Europe depends on external suppliers for chips and foundational models, the AI Act serves as a “regulatory gold standard” that ensures these technologies respect European values.

The AI Office, now fully operational with over 140 staff members, is actively monitoring GPAI providers. Their current focus is on the AI Continent Action Plan, which aims to foster “AI Factories”, supercomputing centers dedicated to training compliant European AI models.

Hacker News and BBC Insights on EU AI Act News

The reception of the Act remains polarized. On Hacker News, developers frequently debate the “Standardization Bottleneck.” Many argue that the delay in harmonized standards (now expected late 2026) creates a “legal vacuum” for companies trying to build high-risk systems today.

Conversely, BBC News has highlighted the Act’s role in protecting public safety. Recent reporting has focused on the mandatory labeling of synthetic content (Deepfakes), which becomes a strict requirement under Article 50 starting August 2026. This is seen as a vital defense against AI-driven misinformation in democratic processes.

The 2026 Compliance Playbook: 10 Industries, 10 Solutions

1. Fintech & Banking (Credit Scoring)

- Explainability Audit: Ensure your AI can generate a human-readable reason for every credit denial.

- Data Governance: Map all data sources to ensure no “proxy variables” (e.g., using zip codes to infer race) are being used.

- Human Oversight: Appoint a “Credit Risk Officer” to sign off on AI-generated limits.

2. Healthcare (Diagnostics)

- Quality Management System (QMS): Implement ISO 13485-aligned processes for your AI software.

- Clinical Validation: Document the accuracy, robustness, and cybersecurity of the model in real-world clinical trials.

- User Instructions: Provide clear manuals for doctors on when to ignore the AI’s suggestion.

3. Human Resources (Recruitment)

- Bias Sandbox: Use the Article 4a (Digital Omnibus) legal basis to run bias-detection tests using sensitive data.

- Transparency: Notify all candidates that their CVs are being processed by an automated system.

- Log Retention: Keep logs of all automated ranking decisions for at least 6 months for potential audits.
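A retention rule like the six-month minimum above is easiest to enforce in the purge job itself. The following sketch assumes a simple in-memory log record and approximates "6 months" as 183 days. The `RankingLog` type and its fields are hypothetical, and a real system would apply the same check to its own storage layer.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Assumption: "at least 6 months" is approximated as 183 days.
MIN_RETENTION = timedelta(days=183)


@dataclass(frozen=True)
class RankingLog:
    candidate_id: str
    score: float
    created_at: datetime


def may_purge(log: RankingLog, now: datetime) -> bool:
    """A log may be deleted only once the minimum retention period has elapsed."""
    return now - log.created_at >= MIN_RETENTION


def split_logs(logs: list[RankingLog], now: datetime):
    """Partition logs into those that must be kept and those that may be purged."""
    keep = [log for log in logs if not may_purge(log, now)]
    purgeable = [log for log in logs if may_purge(log, now)]
    return keep, purgeable


now = datetime(2026, 9, 1, tzinfo=timezone.utc)
old_log = RankingLog("cand-001", 0.82, datetime(2026, 1, 1, tzinfo=timezone.utc))
recent_log = RankingLog("cand-002", 0.64, datetime(2026, 6, 1, tzinfo=timezone.utc))
keep, purgeable = split_logs([old_log, recent_log], now)
```

In this example the January record is old enough to purge and the June record must be kept.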

4. Education (Proctoring & Grading)

- Privacy Impact Assessment (DPIA): Focus on the vulnerability of students (minors) as per GDPR and AI Act overlap.

- Appeal Mechanism: Create a manual process for students to challenge an AI-calculated grade.

- Accuracy Testing: Prove the AI doesn’t penalize students with non-standard accents or behavior.

5. E-commerce (Retail)

- Bot Disclosure: Ensure your AI chatbot clearly states “I am an AI” at the start of every interaction.

- Recommender Transparency: (For very large platforms) Explain the main parameters used to suggest products to a specific user.

- Deepfake Labeling: If using AI models for “virtual try-ons,” label the images as “Synthetically Generated.”
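The bot-disclosure item can be enforced in code rather than left to prompt wording. The sketch below wraps any reply function so that the first reply of each session carries the disclosure. The class name `DisclosingChatSession`, the `echo_bot` stand-in, and the choice to treat one session object as one "interaction" are assumptions made for illustration.

```python
from typing import Callable

AI_DISCLOSURE = "I am an AI."


class DisclosingChatSession:
    """Wraps a reply function and prefixes the first reply of a session with a disclosure."""

    def __init__(self, generate_reply: Callable[[str], str]) -> None:
        self._generate_reply = generate_reply
        self._disclosed = False

    def reply(self, user_message: str) -> str:
        text = self._generate_reply(user_message)
        if self._disclosed:
            return text
        self._disclosed = True
        return f"{AI_DISCLOSURE} {text}"


def echo_bot(message: str) -> str:
    """Stand-in for a real chatbot backend."""
    return f"You asked about: {message}"


session = DisclosingChatSession(echo_bot)
first_reply = session.reply("returns")
second_reply = session.reply("shipping")
```

Putting the disclosure in the wrapper means it cannot be dropped by a change to the underlying model or prompt.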

6. Law Enforcement (Biometrics)

- Strict Prohibitions: Immediately stop any “Emotion Recognition” in the workplace or schools.

- Authorization Log: For high-risk use cases, ensure you have a documented judicial or administrative authorization.

- Annex III Registration: Register the system in the official EU database for high-risk AI.

7. Logistics & Transport (Supply Chain)

- Sensor Reliability: Document how the AI handles “noisy data” from sensors in bad weather.

- Emergency Overrides: Ensure a human operator can manually stop autonomous delivery drones or trucks instantly.

- Incident Reporting: Set up a 24-hour channel to report “Serious Incidents” to the relevant national market surveillance authority.

8. Critical Infrastructure (Energy/Water)

- Cyber-Resilience: Perform stress tests against “adversarial attacks” meant to manipulate grid distribution.

- Redundancy Systems: Ensure the infrastructure can run in “Safe Mode” if the AI fails.

- Third-Party Audit: Prepare for a mandatory conformity assessment by a “Notified Body.”

9. Digital Marketing (Advertising)

- Ad Disclosure: Clearly mark all AI-generated influencers or voices in social media campaigns.

- Copyright Compliance: Ensure your training data respects the “Opt-out” rights of creators under the EU Copyright Directive.

- Vulnerability Check: Ensure ads don’t use “Subliminal Techniques” to exploit specific groups (Article 5).

10. Software Development (SaaS/GPAI)

- Technical Documentation: Maintain a “Model Card” that lists training data, compute power, and energy consumption.

- Systemic Risk Check: If your model uses > 10^25 FLOPs, perform mandatory adversarial (Red Teaming) tests.

- Downstream Support: Provide your API users with the information they need to be compliant.
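The “Model Card” item above can start life as a small structured record that is checked for gaps before release. This is a minimal sketch covering only the three items named in the list (training data, compute, and energy consumption). The field names and example values are hypothetical and do not represent an official template.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class ModelCard:
    model_name: str
    training_data_sources: list[str]
    training_compute_flops: float
    energy_consumption_kwh: float

    def missing_fields(self) -> list[str]:
        """Names of fields that are empty or not yet filled with a positive value."""
        missing = []
        if not self.model_name.strip():
            missing.append("model_name")
        if not self.training_data_sources:
            missing.append("training_data_sources")
        if self.training_compute_flops <= 0:
            missing.append("training_compute_flops")
        if self.energy_consumption_kwh <= 0:
            missing.append("energy_consumption_kwh")
        return missing

    def to_json(self) -> str:
        """Serialize the card so it can be shared with downstream API users."""
        return json.dumps(asdict(self), indent=2)


card = ModelCard(
    model_name="example-model",
    training_data_sources=["licensed-corpus-v1"],
    training_compute_flops=6.3e24,
    energy_consumption_kwh=0.0,  # not yet measured in this example
)
gaps = card.missing_fields()
card_json = card.to_json()
```

Here the check flags the unmeasured energy figure, which is the kind of gap worth catching before the documentation reaches downstream users.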

Expert Take: “The August 2026 deadline isn’t a suggestion; it’s a hard wall. For 90% of companies, the path to compliance starts with Article 6 (High-Risk Classification). If you misclassify today, you pay the fine tomorrow.”

Glossary of Key Terms: Making Sense of the Tech

To stay ahead in 2026, you must understand these five critical terms. They define whether your business is “Safe” or “High-Risk”:

- LLMs (Large Language Models): These are the engines behind tools like ChatGPT, Claude, and Gemini. In the AI Act, they are called GPAI (General-Purpose AI). If you are using an LLM to build an app, you need to know if the model provider has complied with EU transparency rules.

- GPAI (General-Purpose AI): This is the legal term for versatile AI (like LLMs) that can do many things, from writing code to summarizing legal docs. The AI Act has special rules for these because they can be used in almost any industry.

- FLOPs (10^25 Threshold): Think of FLOPs as the “Horsepower” of an AI. If an LLM is trained with more than 10^25 FLOPs, it’s considered a “Systemic Risk” (meaning it’s so powerful it could affect all of Europe). These models face the strictest testing.

- Annex III (The High-Risk List): This is the “Red Zone.” If your AI (even if it’s powered by an LLM) is used to hire people, grade students, or verify identities, it falls under Annex III and must meet heavy safety standards.

- Digital Omnibus (2025): A legal update that made life easier for startups. It allows companies to use sensitive data (normally protected by GDPR) specifically to test their LLMs for bias, ensuring the AI doesn’t discriminate against anyone.

Securing Your AI Future

The EU AI Act is not a static document but an evolving ecosystem. The November 2025 Digital Omnibus proved that the Commission is willing to listen to industry feedback to ensure the rules are “technically feasible.” However, the core deadlines remain unchanged.

Recommended Next Steps:

- Verify your Risk Tier: Use the AI Act Explorer to determine if your system falls under “High-Risk” Annex III.

- Review the November 2025 Amendments: Ensure your compliance strategy accounts for the “Readiness-Based” application of rules.

- Download the Official Text: Access the Regulation (EU) 2024/1689 via EUR-Lex to verify every clause with your legal counsel.

The era of unregulated AI in Europe is over. Those who master the compliance framework today will lead the market in 2027.

Frequently Asked Questions About EU AI Act News

What is the main deadline for the EU AI Act in 2026?

The most critical deadline is August 2, 2026. This is when the “full application” of the law begins for High-Risk AI systems (Annex III) and general transparency obligations (Article 50), including the mandatory labeling of deepfakes and AI-generated content.

Does the EU AI Act apply to companies outside of Europe?

Yes. The Act has extraterritorial reach. If your AI system’s output is used within the European Union, your organization must comply with the regulation, regardless of whether your headquarters are located in the US, Asia, or elsewhere.

What are the penalties for non-compliance in 2026?

Fines are among the highest in the world. Violations can reach up to €35 million or 7% of total global annual turnover, whichever is higher. Small and medium-sized enterprises (SMEs) may receive capped fines under the Digital Omnibus updates.

How do I know if my LLM (like GPT-4) is a “Systemic Risk”?

Under the 2026 guidelines, a General-Purpose AI (GPAI) model is a systemic risk if the cumulative computing power used for its training exceeds 10^25 FLOPs. These models require rigorous adversarial testing and incident reporting to the EU AI Office.

What is the “Digital Omnibus” update from November 2025?

The Digital Omnibus is a simplification package designed to make the AI Act more workable. It introduced Article 4a, allowing companies to use sensitive data for bias detection, and extended the compliance timeline for certain legacy “High-Risk” systems to late 2027.

Are simple AI tools like spell-checkers regulated?

Generally, no. The February 2026 Article 6 Guidelines clarified that AI performing “purely accessory” tasks, such as basic data organizing or spell-checking, is exempt from High-Risk obligations, even if used within a High-Risk process.

Is my AI-driven recruitment tool considered “High-Risk”?

Yes. AI used for recruitment, CV screening, and employee evaluation is classified under Annex III as High-Risk. By August 2026, these tools must have a Conformity Assessment and a human-in-the-loop oversight mechanism.

Can I use sensitive data to test my AI for bias?

Yes. Thanks to the November 2025 Digital Omnibus (Article 4a), providers can process special categories of personal data specifically for bias monitoring and correction, provided they implement strict technical safeguards to protect privacy.

What is the role of the European AI Office in 2026?

The AI Office is the central enforcement body. With over 140 experts, it monitors General-Purpose AI (GPAI) providers, facilitates the Code of Practice, and manages the “AI Factories” supercomputing initiative for European startups.

Do I need to label AI-generated images and videos?

Yes. Under Article 50, any synthetic content (Deepfakes) or AI-generated text/media that could be mistaken for authentic content must be clearly labeled and watermarked starting from the August 2, 2026 deadline.