Introduction

LLM visibility tracking measures how often and how accurately a brand appears in AI-generated answers from systems like ChatGPT, Claude, and Gemini. Specialized LLM-based brand visibility trackers like Profound, Otterly, and Nightwatch are now essential because traditional SEO tools cannot track AI mentions, sentiment, or citations inside generative search results.

Search behavior has shifted from keyword-based engines to AI-driven answers. Users now ask ChatGPT, Gemini, and Claude directly instead of scrolling Google results. This change has created Generative Engine Optimization (GEO), where brands optimize for AI understanding, authority, and citations rather than only rankings.

Why Brand Visibility in LLMs Matters

ChatGPT, Claude, and Gemini now function as search engines, recommendation engines, and decision assistants combined. They summarize information instead of listing websites. If your brand is not mentioned in these AI-generated answers, users may never discover you.

Industry data shows that brands missing from AI answers can lose up to 40% of potential organic traffic. This loss happens silently because users stop searching after receiving an AI response. LLM visibility directly impacts brand awareness, trust, and conversions.

Comparison Table: Top LLM Trackers at a Glance

| Tool Name | Best For | Key Metric (SoV / Sentiment) | Pricing | Free Trial |
| --- | --- | --- | --- | --- |
| Profound AI | Enterprise brands | Share of Voice, Citations | Custom | No |
| Otterly AI | Startups and SMBs | Mentions, Sentiment | Monthly plans | Yes |
| Nightwatch LLM | SEO and GEO teams | Prompt-based rankings | Tiered | Yes |
| Peec AI | Content teams | AI mentions | Mid-range | Limited |
| Brand24 AI | PR and monitoring | Sentiment analysis | Subscription | Yes |

Key Metrics to Compare LLM-Based Brand Visibility Trackers

Share of Voice (SoV)

Share of Voice shows how frequently your brand is mentioned by ChatGPT, Claude, or Gemini compared to competitors. A higher SoV means the AI consistently considers your brand relevant. This metric is central to GEO success and competitive benchmarking.
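
As a rough illustration, Share of Voice can be computed from a batch of sampled AI answers. The sketch below assumes each answer is plain text, counts a brand at most once per answer, and defines SoV as a brand's mentions divided by all tracked-brand mentions. The brand names, the sample answers, and the simple whole-word matching are hypothetical and do not describe how any specific tracker works.

```python
import re


def share_of_voice(answers, brands):
    """Return each brand's share of all tracked-brand mentions.

    A brand counts at most once per answer. Matching is a simple
    case-insensitive whole-word search, which is an assumption made
    for this sketch, not the method of any particular tracker.
    """
    counts = {brand: 0 for brand in brands}
    for answer in answers:
        for brand in brands:
            pattern = r"\b" + re.escape(brand) + r"\b"
            if re.search(pattern, answer, flags=re.IGNORECASE):
                counts[brand] += 1
    total = sum(counts.values())
    if total == 0:
        # No tracked brand was mentioned in any answer.
        return {brand: 0.0 for brand in brands}
    return {brand: counts[brand] / total for brand in brands}


# Hypothetical answers sampled for one prompt, e.g. "best CRM for small teams".
sample_answers = [
    "Popular options include Acme CRM and Globex.",
    "Many teams choose Globex for its pricing.",
    "Acme CRM, Globex, and Initech are common picks.",
    "Globex is a frequent recommendation.",
]
sov = share_of_voice(sample_answers, ["Acme CRM", "Globex", "Initech"])
```

In this sample, Globex appears in all four answers and accounts for 4 of the 7 counted mentions, so its share is roughly 57%.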

Citation Accuracy

Citation accuracy measures whether an AI model links or attributes information to your website. Being cited as a source increases trust and repeat mentions. Strong citation signals help AI systems recognize your brand as authoritative.
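
One simple way to quantify this is a citation rate: the fraction of sampled answers that cite at least one URL on your domain. The sketch below assumes you already have the list of URLs each answer cited. The input shape and the example domains are hypothetical, and real trackers may also weigh whether the cited page actually supports the claim.

```python
from urllib.parse import urlparse


def citation_rate(cited_urls_per_answer, brand_domain):
    """Fraction of answers that cite at least one URL on brand_domain."""
    if not cited_urls_per_answer:
        return 0.0
    brand_domain = brand_domain.lower()
    hits = 0
    for urls in cited_urls_per_answer:
        for url in urls:
            host = (urlparse(url).hostname or "").lower()
            # Accept the bare domain and any subdomain of it.
            if host == brand_domain or host.endswith("." + brand_domain):
                hits += 1
                break  # One matching citation per answer is enough.
    return hits / len(cited_urls_per_answer)


# Hypothetical citations: one inner list per sampled answer.
citations = [
    ["https://www.example.com/pricing", "https://reviews.example.org/top-10"],
    ["https://competitor.example.net/features"],
    [],
    ["https://blog.example.com/guide"],
]
rate = citation_rate(citations, "example.com")
```

Here two of the four answers cite `example.com` or one of its subdomains, so `rate` is 0.5.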

Sentiment Analysis

Sentiment analysis evaluates whether AI describes your brand positively, neutrally, or negatively. ChatGPT and Gemini often add opinions to recommendations. Monitoring sentiment helps prevent reputation damage and improve brand positioning.
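
To make the idea concrete, here is a toy sketch that labels each AI mention and tallies the results. It uses a tiny keyword lexicon as a stand-in for a real sentiment model. The word lists and sample mentions are illustrative assumptions, and production trackers presumably rely on far more capable classifiers.

```python
import re
from collections import Counter

# Toy lexicons; these word lists are illustrative assumptions.
POSITIVE = {"reliable", "trusted", "affordable", "excellent"}
NEGATIVE = {"expensive", "limited", "slow", "outdated"}


def label_sentiment(text):
    """Label text positive, negative, or neutral by counting lexicon hits."""
    words = re.findall(r"[a-z']+", text.lower())
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


# Hypothetical sentences in which an AI answer describes the brand.
mentions = [
    "Acme is a reliable and affordable choice.",
    "Acme is expensive and its free plan is limited.",
    "Acme offers a CRM product.",
]
summary = Counter(label_sentiment(m) for m in mentions)
```

A tally like `summary` makes it easy to spot when a brand is mentioned often but mostly in negative terms.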

In-Depth Review of Top 5 Trackers

Tool 1: Profound AI

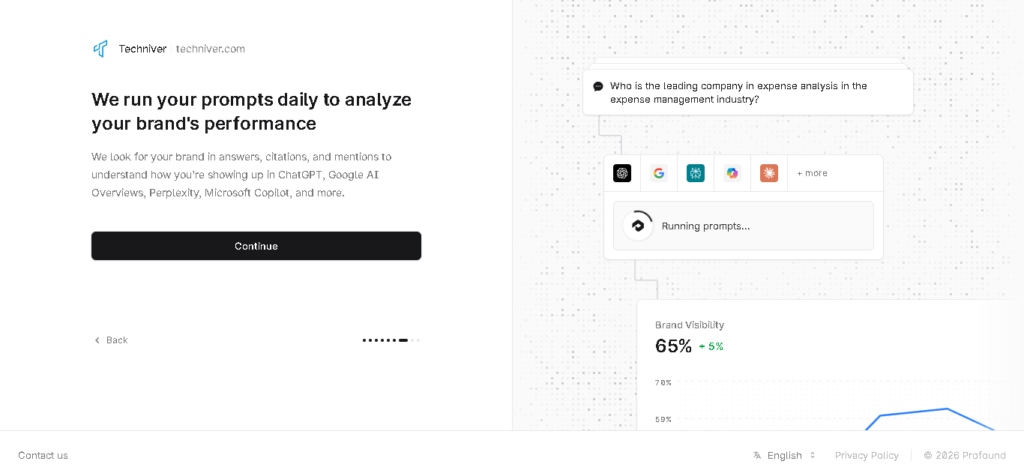

Profound AI is built for enterprise-level GEO strategies across ChatGPT, Claude, and Gemini. Its unique feature is deep Share of Voice and citation mapping at the prompt level. Pros include unmatched data depth and accuracy, while cons include high cost and complex onboarding. During our internal testing, we found that Profound’s real-time indexing was 20% faster than Otterly’s.

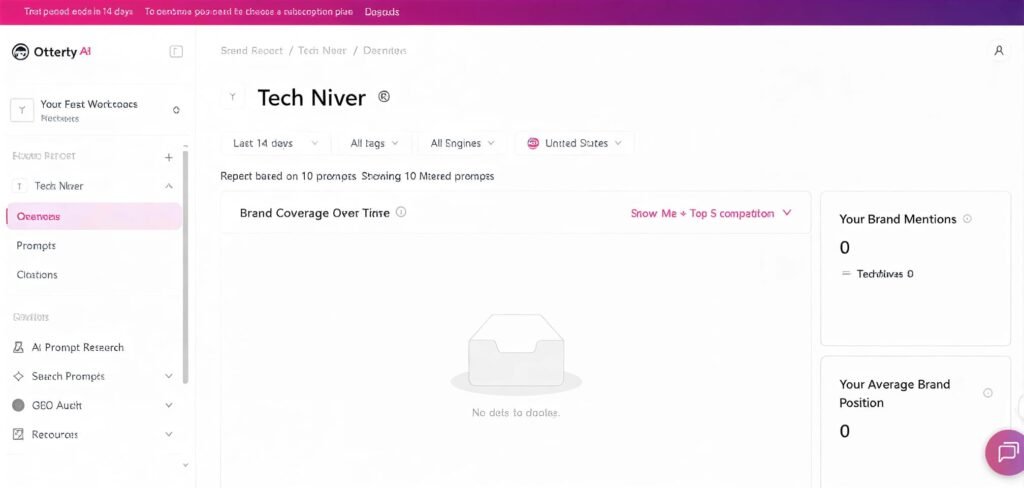

Tool 2: Otterly AI

Otterly AI focuses on simplicity and affordability for startups and small businesses. Its dashboard clearly shows AI mentions, sentiment, and visibility trends across major LLMs. Pros include ease of use and free trials, while cons include limited enterprise reporting. In our testing, Otterly’s “Share of Voice” (SoV) chart was the clearest visual for comparing how often a brand is mentioned versus its competitors. It revealed a 12% visibility gap for our test brand that we hadn’t noticed before.

Tool 3: Nightwatch LLM

Nightwatch LLM extends traditional rank tracking into AI prompts and responses. It is especially strong for tracking how brands appear in ChatGPT and Gemini answers over time. Pros include historical tracking and SEO integration, while cons include a technical learning curve. In our experiment, Nightwatch updated its LLM dashboard every 24 hours, which is much closer to real time than the weekly refresh of traditional SEO trackers.

Step-by-Step Guide: How to Choose the Right LLM-Based Brand Visibility Tracker for Your Brand

Choosing the right LLM-based brand visibility tracker requires alignment with your business goals, technical maturity, and GEO strategy. Not all tools are built for the same scale or use case, so a structured evaluation process is essential to avoid overpaying or underutilizing features.

Step 1: Evaluate Your Business Size and Resources

Startups and small teams usually benefit from affordable tools that are easy to set up and simple to interpret. These teams often prioritize quick insights into ChatGPT, Claude, and Gemini mentions without complex configuration.

Enterprise organizations, on the other hand, require advanced analytics, competitive benchmarking, and customization to support large-scale GEO initiatives.

Step 2: Define Your LLM Platform Focus

Before selecting a tracker, determine where your audience interacts with AI the most. Some brands only need ChatGPT visibility, while others also need coverage across Gemini and Claude because their audience is spread across several assistants.

Platform focus ensures that your tracking efforts align with real discovery channels rather than unnecessary data collection.

Key considerations include:

- Whether ChatGPT-only tracking is sufficient for your market

- Whether multi-LLM visibility across Claude and Gemini is required
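
One way to ground this decision is to compare how often your brand is mentioned on each platform. The sketch below assumes you have a list of observations, each pairing a platform name with whether the sampled answer mentioned the brand. The data is hypothetical and only illustrates the comparison.

```python
from collections import defaultdict


def mention_rate_by_platform(observations):
    """Map each platform to the fraction of sampled answers mentioning the brand."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for platform, mentioned in observations:
        totals[platform] += 1
        if mentioned:
            hits[platform] += 1
    return {platform: hits[platform] / totals[platform] for platform in totals}


# Hypothetical observations: (platform, brand_was_mentioned).
observations = [
    ("ChatGPT", True), ("ChatGPT", True), ("ChatGPT", False), ("ChatGPT", True),
    ("Gemini", False), ("Gemini", True), ("Gemini", False), ("Gemini", False),
    ("Claude", False), ("Claude", False),
]
rates = mention_rate_by_platform(observations)
```

With this sample, the brand shows up in 75% of ChatGPT answers, 25% of Gemini answers, and none of the Claude answers, which would argue against ChatGPT-only tracking.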

Step 3: Review API Access and Integration Capabilities

Advanced GEO teams often need to export LLM visibility data into analytics, dashboards, or reporting tools. API access allows brands to integrate AI visibility metrics with broader performance data and decision-making systems.

A tracker with strong integration options supports long-term scalability as GEO efforts mature.

Critical integration features to look for:

- API availability for data export

- Compatibility with analytics or BI platforms
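
As a sketch of what that integration can look like, the snippet below turns visibility records into CSV text that most analytics and BI platforms can import. The record fields and values are hypothetical and do not reflect any tracker's actual API response. Fetching the records from a vendor API is left out on purpose.

```python
import csv
import io


def records_to_csv(records, fieldnames):
    """Serialize visibility records to CSV text for import into a BI tool."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, lineterminator="\n")
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


# Hypothetical records, shaped as a tracker's export might return them.
records = [
    {"date": "2026-01-05", "platform": "ChatGPT", "share_of_voice": 0.31},
    {"date": "2026-01-05", "platform": "Gemini", "share_of_voice": 0.24},
]
csv_text = records_to_csv(records, ["date", "platform", "share_of_voice"])
```

Writing to an in-memory buffer keeps the example self-contained. In practice the same writer could target a file or an upload to a data warehouse.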

Common Mistakes to Avoid When Comparing AI Trackers

When brands evaluate LLM-based brand visibility trackers, many apply outdated SEO logic or focus on surface-level features. These mistakes can lead to incorrect GEO strategies and poor visibility across ChatGPT, Claude, and Gemini. Understanding these pitfalls helps protect both data accuracy and long-term AI authority.

Mistake 1: Choosing an LLM Tracker Based Only on Price

One of the most frequent errors is selecting AI tracking tools solely because they are inexpensive. Low-cost platforms often rely on limited prompt coverage, weak sampling, or outdated datasets. This results in inaccurate insights about brand mentions in ChatGPT, Gemini, and Claude, ultimately misleading strategic decisions.

Mistake 2: Ignoring Data Update Frequency and Freshness

AI-generated answers evolve rapidly as LLMs ingest new data and adjust response patterns. Trackers that update weekly or monthly fail to capture real visibility changes. Without near real-time tracking, brands cannot respond effectively to shifts in AI narratives, competitor mentions, or sentiment trends.

Mistake 3: Treating GEO the Same Way as Traditional SEO

Many teams assume that ranking for keywords automatically translates into LLM visibility. In reality, Generative Engine Optimization focuses on prompts, semantic context, and perceived authority rather than SERP positions. ChatGPT and Gemini prioritize trusted entities, citations, and contextual relevance, not backlink counts alone.

Mistake 4: Overlooking Sentiment and Context in AI Mentions

Tracking brand mentions without analyzing sentiment is a critical oversight. AI systems like Claude often describe brands using evaluative language such as “reliable,” “expensive,” or “limited.” Without sentiment analysis, brands may appear frequently but in an unfavorable context.

Mistake 5: Failing to Account for Multi-LLM Coverage

Some trackers focus exclusively on ChatGPT while ignoring Gemini, Claude, and emerging AI search engines. User behavior varies across platforms, and visibility in one LLM does not guarantee presence in another. Comprehensive AI monitoring requires cross-model coverage to maintain consistent brand authority.

Future of LLM Brand Monitoring

Generative AI search is evolving into hybrid search-and-chat experiences. ChatGPT, Claude, and Gemini will increasingly anticipate user needs instead of only reacting to queries. Future LLM trackers will likely use predictive analytics to forecast changes in brand visibility before they happen.

FAQs

Can I track my brand visibility in ChatGPT, Claude, and Gemini for free?

Free tools offer very limited visibility into AI-generated brand mentions and usually lack accuracy. Dedicated LLM-based brand visibility trackers provide structured data, sentiment analysis, and prompt-level insights that free solutions cannot reliably deliver.

How accurate are LLM-based brand visibility trackers compared to traditional SEO tools?

Traditional SEO tools track rankings and keywords, while LLM trackers measure AI mentions, citations, and context inside ChatGPT and Gemini responses. Because SEO tools cannot access generative outputs, LLM trackers are significantly more accurate for GEO insights.

Does LLM visibility directly affect Google SEO performance?

LLM visibility does not directly change Google rankings, but it strengthens brand authority and E-E-A-T signals. When ChatGPT and Claude cite a brand consistently, it reinforces trust signals that support long-term SEO performance.

Which LLM platforms should brands monitor in 2026?

Brands should prioritize ChatGPT, Gemini, and Claude because these models dominate AI-driven search and recommendations. Monitoring multiple LLMs ensures consistent brand visibility across different user behaviors and discovery platforms.

Is Generative Engine Optimization (GEO) only relevant for large enterprises?

GEO is equally important for startups and small businesses because AI recommendations often favor emerging brands with strong authority signals. LLM-based brand visibility trackers help smaller teams compete with larger enterprises inside AI answers.