UK AI Regulation News Today

As of early May 2026, navigating the shifting landscape of digital policy means keeping a close watch on UK AI regulation news. While the European Union enforces its omnibus AI Act, the United Kingdom has taken a distinct path. Led by Technology Secretary Liz Kendall MP and the Department for Science, Innovation and Technology (DSIT), the UK’s policy framework is moving toward a hybrid strategy that balances a sector-specific, pro-innovation stance with targeted interventions for highly capable frontier models.

This in-depth brief brings you the most critical UK AI regulation news today, analysing recent enforcement powers, algorithmic safety, and intellectual property frameworks to help your organisation remain compliant.

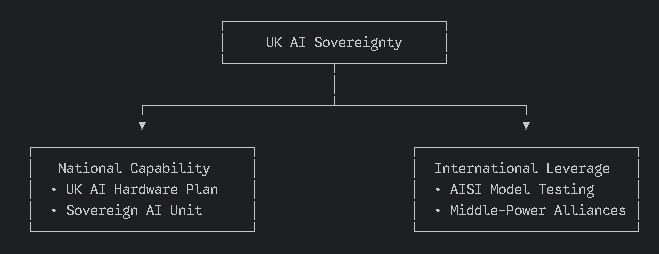

The Core Pillar: From Direct Legislation to ‘AI Sovereignty’

In a landmark policy shift announced at the Royal United Services Institute (RUSI) on April 28, 2026, the government set out a more muscular approach. The headline change is that the Labour government has shelved plans to introduce a heavy, single-statute “AI Bill.” Instead, it is focusing its energy on building the UK’s underlying technological leverage, often referred to as “AI Sovereignty.”

The 2026 AI Hardware Plan

The UK government plans to launch a dedicated AI Hardware Plan at London Tech Week in June 2026. This policy aims to secure a 5% global market share in AI chips and semiconductors, reducing the UK’s reliance on external supply chains and ensuring national compute capability.

International Alliances and Standards

A central element of the UK’s AI regulation strategy is positioning the country as a “keystone in the global tech architecture.” Rather than enforcing local binding rules on minor software platforms, the UK is collaborating with middle-power nations to establish unified evaluation standards.

In July 2026, at the next meeting of the International Network of AI Security Institutes, the UK’s AI Security Institute (AISI) will publish best-practice frameworks for testing and evaluating frontier models.

Sector-Specific Governance: The Five Principles

While cross-cutting legislation remains absent, existing regulators use their statutory powers to govern AI based on five core principles first outlined in the AI White Paper:

- Safety, Security, and Robustness

- Appropriate Transparency and Explainability

- Fairness

- Accountability and Governance

- Contestability and Redress

| Regulatory Authority | Core Domain | Latest Guidance & Actions |
| --- | --- | --- |
| Information Commissioner’s Office (ICO) | Data Privacy | Updated guidance on how the UK GDPR applies to biometric recognition, AI system training, and generative AI tools. |
| Financial Conduct Authority (FCA) & PRA | Banking & Financial Markets | Focus on AI model risk management, algorithmic trading, and data quality within consumer products. |
| Competition and Markets Authority (CMA) | Competition & Consumer Rights | Monitoring foundation model developers to prevent anti-competitive consolidation and unfair advantages. |
| Medicines & Healthcare products Regulatory Agency (MHRA) | Healthcare & Medical Devices | Reviewing evidence to establish safety standards for AI-assisted medical diagnostic tools. |
| Office of Communications (Ofcom) | Online Services & Platforms | Overseeing the impact of algorithms and LLMs on the distribution of harmful or illegal content. |

Keeping up with UK AI regulation news today means recognising that these regulators are independently interpreting their statutory powers. This can lead to differing enforcement approaches across industries.

The Rise of Municipal & Regional AI Governance in the UK

While national regulators dominate the headlines in the UK AI regulation news today, a major trend is the emergence of regional and municipal AI frameworks. Tech leaders must look beyond Whitehall to understand local compliance:

- Greater London Authority (GLA) AI Guidelines: The GLA has quietly established its own internal procurement and deployment frameworks for algorithmic systems used in urban planning, transport (TfL), and localised public services.

- Devolved Nations’ Divergence: Scotland and Wales are exploring specific ethical guidelines that prioritise public sector transparency. Scotland’s AI Strategy continues to emphasise a “trustworthy, ethical, and inclusive” deployment model that may enforce higher human-in-the-loop thresholds than England.

- Impact on Local Enterprise: If your enterprise provides AI-driven SaaS or smart-city technologies to regional councils, you must align not only with national DSIT principles but also with local ethical impact assessments.

Key Legislative Milestones Impacting AI in 2026

To stay informed on the UK AI regulation news today, businesses must understand the cross-sector statutes that actively govern AI development and deployment.

A. The Data (Use and Access) Act 2025

Commencing on February 5, 2026, the Data (Use and Access) Act 2025 introduces several AI-friendly reforms:

- Automated Decision-Making (ADM): It relaxes certain EU-style constraints on ADM under the UK GDPR, making it easier to deploy AI models for automated processing, provided safeguards such as the ability to contest a decision and request human intervention remain in place.

- Broad Scientific Consent: It expands the legal definition of “scientific research” to include commercial research and development. This allows developers to use datasets for AI training under a wider consent framework.

B. The Online Safety Act 2023 & The Crime and Policing Bill

The scope of algorithmic accountability has widened in 2026. The UK AI regulation news today highlights targeted regulatory expansions regarding digital platforms and synthetic media:

- Chatbot Liabilities: Technology Secretary Liz Kendall recently tasked DSIT officials with assessing gaps in the Online Safety Act 2023. This is moving the government toward granting the Secretary of State powers to enforce safety duties on AI service providers.

- Synthetic Media & Nudification: On February 6, 2026, amendments to the Sexual Offences Act 2003 took effect, criminalising the creation or distribution of non-consensual synthetic intimate images. The Crime and Policing Bill further bans tools like AI deepfake generators and nudification software.

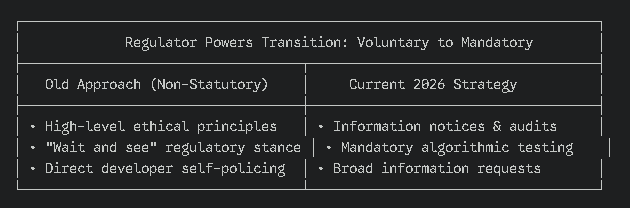

The Enforcement Paradigm: UK Regulators’ New Information-Gathering Powers

An often-overlooked part of the picture is how sector-specific principles are transitioning from voluntary guidance to de facto mandatory rules through existing statutory powers:

- Section 31 Information Notices: Regulators like the Competition and Markets Authority (CMA) and the Information Commissioner’s Office (ICO) are increasingly using their existing investigative and information-gathering powers to demand deep algorithmic audits from foundation model developers.

- The Strategic Review Deadlines: By early 2026, all major regulators were required to submit their updated strategic AI enforcement plans to the government. This has turned once-vague ethical pillars into concrete enforcement targets, particularly regarding cross-market dominance and data harvesting.

- Anticipated Penalties: Non-compliance no longer results in a mere slap on the wrist. Using automated systems that process personal data or impact digital markets without clear audit trails exposes tech companies to immediate statutory fines under the UK GDPR and consumer protection laws.
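The "clear audit trails" mentioned above can take many forms, and no regulator prescribes a specific schema. As a minimal sketch only, the following shows one common pattern: an append-only log of automated decisions in which each record stores the hash of the previous one, so later edits to earlier records are detectable. The class names, field names, and sample values are all hypothetical and chosen for illustration.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import List


@dataclass(frozen=True)
class DecisionRecord:
    """One automated decision, captured for later audit (hypothetical schema)."""
    decision_id: str
    model_version: str
    input_summary: str   # a description, not raw personal data
    outcome: str
    human_reviewed: bool
    timestamp: str
    prev_hash: str       # digest of the previous record, forming a chain

    def digest(self) -> str:
        # Serialise with sorted keys so the hash is stable across runs.
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


class AuditTrail:
    """Append-only, hash-chained log of decisions (illustrative only)."""
    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.records: List[DecisionRecord] = []

    def append(self, decision_id: str, model_version: str, input_summary: str,
               outcome: str, human_reviewed: bool = False) -> DecisionRecord:
        prev = self.records[-1].digest() if self.records else self.GENESIS
        record = DecisionRecord(
            decision_id=decision_id,
            model_version=model_version,
            input_summary=input_summary,
            outcome=outcome,
            human_reviewed=human_reviewed,
            timestamp=datetime.now(timezone.utc).isoformat(),
            prev_hash=prev,
        )
        self.records.append(record)
        return record

    def verify(self) -> bool:
        """Return True if every record links to its predecessor's digest.

        Note: the final record has no successor, so protecting it would
        require storing the latest digest somewhere outside this log.
        """
        expected = self.GENESIS
        for record in self.records:
            if record.prev_hash != expected:
                return False
            expected = record.digest()
        return True


trail = AuditTrail()
trail.append("d-1", "credit-model-v1", "loan application", "approved")
trail.append("d-2", "credit-model-v1", "loan application", "declined",
             human_reviewed=True)
print(trail.verify())
```

A production system would also need durable storage, access controls, and retention rules, none of which this sketch attempts to cover.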

IP, Copyright, and AI Training Developments

For creative industries and LLM developers, the most anticipated updates in the UK AI regulation news today involve intellectual property.

Following a comprehensive consultation that concluded with an economic impact assessment and a substantive report on March 18, 2026, the government confirmed its position on copyright reform:

- No New Opt-Out Data Mining Exception: The previous proposal for a broad text and data mining (TDM) exception with an opt-out mechanism was dropped.

- Focus on Input Transparency: To train AI models using copyrighted works in the UK, developers still need a licence. The government is working with the industry to set voluntary standards for input transparency, allowing rights holders to assert their rights without aggressive statutory changes.

- Abolition of Wholly Computer-Generated Copyright: The government supports removing specific copyright protection for works generated entirely by AI, ensuring protection remains focused on human creativity.

Compute Infrastructure and ESG Compliance in AI Deployment

AI regulation in the UK cannot be separated from the broader environmental, social, and governance (ESG) obligations placed on digital infrastructure in 2026:

- The DSIT Green Compute Initiative: As the UK scales up its Sovereign AI capabilities, the government has tied advanced compute grants and AI sandbox access to high energy efficiency standards.

- Mandatory Carbon Reporting: Under the UK’s evolving non-financial reporting frameworks, enterprise companies deploying compute-heavy LLMs are increasingly expected to audit and report the carbon footprint associated with their AI workloads and data centre utilisation.

- The ESG Competitive Advantage: Tech leaders who optimise their algorithmic architectures for energy efficiency, such as adopting smaller, specialised fine-tuned models rather than massive general-purpose frontier systems, are likely to face lower regulatory hurdles and gain faster clearance from infrastructure regulators.
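For teams starting to audit the carbon footprint of AI workloads, a first-order estimate is usually simple arithmetic: IT energy multiplied by the data centre's power usage effectiveness (PUE), multiplied by the carbon intensity of the grid. The sketch below illustrates that calculation. The function name and every input value are assumptions for illustration, not figures from any UK reporting framework; real reporting should use measured energy data and published emission factors.

```python
def estimate_workload_emissions_kg(
    gpu_count: int,
    avg_power_kw_per_gpu: float,
    hours: float,
    pue: float,
    grid_intensity_kg_per_kwh: float,
) -> float:
    """Rough CO2e estimate (kg) for a compute job.

    emissions = gpu_count * power_per_gpu * hours * PUE * grid_intensity
    """
    if min(gpu_count, avg_power_kw_per_gpu, hours, grid_intensity_kg_per_kwh) < 0:
        raise ValueError("inputs must be non-negative")
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")

    it_energy_kwh = gpu_count * avg_power_kw_per_gpu * hours
    facility_energy_kwh = it_energy_kwh * pue  # adds cooling and other overhead
    return facility_energy_kwh * grid_intensity_kg_per_kwh


# Hypothetical fine-tuning run: 8 GPUs drawing 0.4 kW each for 100 hours,
# in a facility with PUE 1.2, on a grid emitting 0.2 kg CO2e per kWh.
emissions = estimate_workload_emissions_kg(8, 0.4, 100, 1.2, 0.2)
print(f"{emissions:.1f} kg CO2e")  # prints "76.8 kg CO2e"
```

The same function makes the trade-off in the last bullet concrete: halving GPU-hours by using a smaller model halves the estimate, all else being equal.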

Summary: What AI Developers and Businesses Need to Do Now

Following the latest UK AI regulation news today, compliance is no longer a passive effort. Companies operating AI systems within the UK should focus on these key practices:

- Perform Regular Safety Audits: Align with the AI Security Institute’s upcoming July evaluation standards to ensure models are robust before deployment.

- Review Data Practices: Use the updated provisions in the Data (Use and Access) Act 2025 to streamline automated decision-making and data consent, while maintaining transparency.

- Follow Sector-Specific Rules: Check for updates from the ICO, FCA, and CMA on algorithmic bias, competition risks, and data protection.

By keeping a close eye on the latest UK AI regulation news today, organisations can balance rapid deployment with long-term compliance, turning regulatory changes into a competitive advantage.

Frequently Asked Questions

Does the UK have a dedicated AI Act like the EU?

No, the UK has officially shelved plans for an omnibus AI Act. Instead, the government follows a pro-innovation, sector-specific strategy led by the Department for Science, Innovation and Technology (DSIT). Regulation is distributed across existing statutory bodies like the ICO, CMA, and FCA, which enforce high-level safety, fairness, and algorithmic transparency principles within their respective jurisdictions.

How does the Data (Use and Access) Act 2025 affect AI developers?

Effective as of February 5, 2026, the Data (Use and Access) Act 2025 streamlines AI deployment by:

- Automated Decision-Making (ADM): Relaxing certain EU-style restrictions under the UK GDPR, so that more automated processing can run without routine human intervention, provided safeguards such as the ability to contest a decision remain in place.

- Commercial R&D Exemptions: Broadening the legal scope of “scientific research” to include commercial research and development, allowing tech companies to use datasets for training AI models under a wider consent framework.

What is the purpose of the 2026 UK AI Hardware Plan?

Announced by Technology Secretary Liz Kendall MP, the AI Hardware Plan (launching in June 2026 at London Tech Week) aims to secure a 5% global market share in semiconductors and chips. This policy establishes sovereign compute infrastructure and limits the UK’s dependence on external tech supply chains, ensuring both national security and domestic technological advantage.

Are there new penalties for deploying AI without human oversight in the UK?

Yes, in practice. Sector-specific regulators are using Section 31 Information Notices to require algorithmic audits. If automated decision-making systems process personal data without clear audit trails, or breach the UK GDPR or the Equality Act 2010, enterprises face enforcement action. For the most serious UK GDPR infringements, statutory fines can reach £17.5 million or 4% of global annual turnover, whichever is higher.
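The 4% figure is one half of the UK GDPR's higher maximum, which is the greater of £17.5 million or 4% of total worldwide annual turnover. As a rough illustration of how that ceiling scales with company size, the sketch below computes it for two hypothetical turnover figures. The function and constant names are invented here, and the result is only the statutory cap: actual penalties are set by the ICO case by case and are typically far lower.

```python
UK_GDPR_HIGHER_MAX_FIXED_GBP = 17_500_000   # fixed limb of the higher maximum
UK_GDPR_HIGHER_MAX_TURNOVER_PERCENT = 4     # turnover limb, as a percentage


def uk_gdpr_higher_maximum_fine(global_annual_turnover_gbp: float) -> float:
    """Return the statutory ceiling in GBP: the greater of the two limbs."""
    if global_annual_turnover_gbp < 0:
        raise ValueError("turnover must be non-negative")
    turnover_limb = (
        global_annual_turnover_gbp * UK_GDPR_HIGHER_MAX_TURNOVER_PERCENT / 100
    )
    return max(UK_GDPR_HIGHER_MAX_FIXED_GBP, turnover_limb)


# Hypothetical companies: £100m and £1bn in global annual turnover.
print(uk_gdpr_higher_maximum_fine(100_000_000))    # fixed limb applies
print(uk_gdpr_higher_maximum_fine(1_000_000_000))  # 4% limb applies
```

For smaller firms the fixed £17.5 million limb dominates, so the cap does not shrink in proportion to turnover.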

What are the UK’s rules for AI training on copyrighted data in 2026?

The government has rejected the introduction of a general text and data mining (TDM) opt-out exception for AI training. Instead, to train AI systems on copyrighted works in the UK, developers must obtain licences from rights holders. The current framework emphasises voluntary input transparency standards to help rights holders enforce their intellectual property rights.

How is regional AI governance handled across London and Scotland?

Beyond national frameworks, regional governance is tightening:

- Greater London Authority (GLA): Applies its own internal ethical and technical procurement frameworks to AI systems used in local infrastructure and transport.

- Scotland’s AI Strategy: Emphasises transparency for public sector algorithmic deployments and may set higher human-in-the-loop thresholds than England.

What are the rules for AI chatbots and deepfakes under UK law?

AI chatbots and synthetic media face stringent new rules. Following the February 6, 2026, amendments to the Sexual Offences Act 2003, creating or distributing non-consensual synthetic intimate images (deepfakes) is explicitly criminalised. Furthermore, the government is moving to bring generative AI chatbots under the enforcement scope of the Online Safety Act 2023, which would give regulators more powers to sanction platforms that fail to filter illegal or harmful content.

Are AI companies legally required to test their models with the UK AI Security Institute?

Currently, testing advanced frontier models with the AI Security Institute (AISI) operates as a voluntary and cooperative commitment rather than a strict legal obligation. However, it functions as a de facto standard for high-risk systems. Leading developers share pre- and post-deployment evaluation metrics with the AISI. In July 2026, at the next meeting of the International Network of AI Security Institutes, the AISI will publish best-practice frameworks for testing and evaluating frontier models, which should help solidify these expectations globally.

How are UK regulators using the five AI principles without passing new laws?

The UK government directs sectoral regulators, such as the ICO, FCA, and CMA, to apply five core non-statutory principles (Safety, Transparency, Fairness, Accountability, and Contestability) within their existing legal frameworks. Regulators enforce these by updating their sector-specific guidance. For instance, the ICO applies these principles using the UK GDPR, while the FCA and the Prudential Regulation Authority (PRA) apply them through their existing rulebooks for automated financial systems.

Does the UK regulate the use of AI in recruitment and the workplace?

Yes. Although there is no single AI employment law, deploying algorithmic tools for hiring or workplace management must comply with existing frameworks. The Equality Act 2010 protects against algorithmic discrimination or bias, while the Employment Rights Act 1996 safeguards against unfair dismissals caused by automated decision-making. DSIT has also published its Responsible AI in Recruitment guidance to help organisations audit their automated processes.

What is the stance of the Home Office on the regulation of Police AI and facial recognition?

As frequently highlighted in the UK AI regulation news today, the Home Office’s launch of the Police.AI centre, backed by £115 million, has made algorithmic policing a major regulatory focus. While the government encourages using advanced AI tools across the 43 forces in England and Wales, these tools must operate under strict ethical boundaries. Sectoral oversight from data protection authorities ensures that live facial recognition (LFR) and predictive algorithms comply with the Data Protection Act 2018 to avoid unlawful profiling.