Introduction

To monitor your brand's LLM visibility, track a fixed set of search prompts across AI platforms such as ChatGPT, Gemini, Claude, Perplexity, and Google AI Overviews. Monitoring tools run the same queries repeatedly and record where your brand is mentioned, cited, or excluded. They then analyze share of voice, sentiment, and the sources AI models rely on for their answers. Using the best tools for tracking LLM brand visibility, you can compare results with competitors and identify exactly which content needs optimization to appear more often in AI responses.

What Is LLM Brand Visibility and Why It Matters

LLM brand visibility refers to how often and in what context large language models mention or cite your brand when users ask questions related to your industry. Unlike traditional search results, where multiple websites can rank on the first page, AI platforms usually provide a single consolidated answer. This means only a few brands receive visibility, while others are completely excluded.

When your brand does not appear in AI-generated answers, you lose early-stage buyer influence, authority, and trust. This is why companies increasingly rely on LLM visibility analysis to understand how AI systems describe them and how they compare to competitors in AI-driven discovery.

How LLM Brand Visibility Monitoring Works in Practice

Identifying Priority Queries and Prompts

LLM visibility monitoring starts by defining the exact questions your audience asks AI platforms. These are not random keywords but full prompts that reflect real user intent. Brands focus on category-level questions, buyer-intent queries, comparison prompts, and problem-based questions relevant to their product or service.

By locking in a fixed set of priority prompts, brands create a consistent baseline for monitoring visibility over time. Without this step, visibility data becomes fragmented and unreliable.

Testing Prompts Across AI Platforms

Once prompts are finalized, the same questions are tested across multiple AI platforms. Each AI engine responds differently based on its training data, ranking logic, and citation behavior. This step reveals whether a brand appears consistently across platforms or only in specific AI systems.

During this stage, teams analyze how prominently the brand is mentioned, whether competitors are favored, and whether the AI response positions the brand as a primary recommendation or a secondary option.
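To make the repeat-testing step concrete, here is a minimal Python sketch of a prompt harness. It assumes you supply one hypothetical `ask` function per platform, for example a thin wrapper around each vendor's official API client. The names `run_prompt_suite`, `ResponseRecord`, and `runs_per_prompt` are illustrative and do not belong to any tool mentioned in this article.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical signature: send one prompt to one AI platform, return the answer text.
AskFn = Callable[[str], str]


@dataclass
class ResponseRecord:
    prompt: str
    platform: str
    run: int
    text: str


def run_prompt_suite(
    prompts: List[str],
    platforms: Dict[str, AskFn],
    runs_per_prompt: int = 3,
) -> List[ResponseRecord]:
    """Send every prompt to every platform `runs_per_prompt` times and keep every answer."""
    records: List[ResponseRecord] = []
    for prompt in prompts:
        for platform, ask in platforms.items():
            for run in range(1, runs_per_prompt + 1):
                records.append(ResponseRecord(prompt, platform, run, ask(prompt)))
    return records


if __name__ == "__main__":
    # Stand-in platforms for a dry run; replace the lambdas with real API calls.
    fake_platforms = {
        "chatgpt": lambda p: f"ChatGPT answer to: {p}",
        "perplexity": lambda p: f"Perplexity answer to: {p}",
    }
    results = run_prompt_suite(["Best CRM for startups?"], fake_platforms, runs_per_prompt=2)
    print(len(results))  # 1 prompt x 2 platforms x 2 runs = 4
```

The harness runs each prompt several times on purpose. AI answers can vary from one run to the next, so a small sample per prompt gives a steadier picture than a single response.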

Capturing Mentions, Citations, and Context

After collecting AI responses, each answer is analyzed to record brand mentions and citations. A mention indicates that the brand name appears in the response, while a citation shows that the AI explicitly references or links to a specific webpage or source.

Context matters as much as presence. Monitoring includes checking whether the brand is framed as an industry leader, a budget option, a niche solution, or an alternative recommendation. This qualitative layer explains not just if your brand appears, but how it is perceived.
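The sketch below shows a rough way to automate the split between mentions and citations. It assumes responses are plain text and that citations appear as raw URLs. Some platforms return sources as separate structured fields instead, and you would check those directly. The brand name and domain are placeholders. Whole-word matching is a simplification: it will not catch misspellings or abbreviations, and it will also match a name that appears inside a URL.

```python
import re
from typing import List
from urllib.parse import urlparse

# Matches http(s) URLs up to whitespace, brackets, or quotes.
URL_RE = re.compile(r"https?://[^\s<>()\[\]\"']+")


def brand_mentioned(text: str, brand: str) -> bool:
    """True if the brand name appears as a whole word (case-insensitive)."""
    pattern = r"(?<!\w)" + re.escape(brand) + r"(?!\w)"
    return re.search(pattern, text, flags=re.IGNORECASE) is not None


def cited_urls(text: str) -> List[str]:
    """All http(s) URLs in the answer, with trailing sentence punctuation removed."""
    return [url.rstrip(".,;:!?") for url in URL_RE.findall(text)]


def brand_cited(text: str, domain: str) -> bool:
    """True if any cited URL points at the brand's domain or one of its subdomains."""
    domain = domain.lower()
    for url in cited_urls(text):
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            return True
    return False


if __name__ == "__main__":
    answer = "Top picks: Acme CRM and Globex. Source: https://www.acme.com/pricing."
    print(brand_mentioned(answer, "Acme CRM"))  # True
    print(brand_cited(answer, "acme.com"))      # True
    print(brand_cited(answer, "globex.com"))    # False
```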

Comparing Visibility Against Competitors

LLM visibility monitoring always includes competitor analysis. The same prompts are evaluated to see which brands dominate AI responses and which are ignored. This comparison reveals the share of voice within AI-generated answers and highlights gaps where competitors outperform your brand.

By analyzing competitor positioning, brands can understand what type of content, authority signals, or messaging AI platforms reward most in their industry.
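Gap detection takes only a few lines of code. This sketch assumes you already have one answer per prompt and a hand-picked competitor list. The `competitor_gaps` helper and its whole-word matching are illustrative choices, not a feature of any specific tool.

```python
import re
from typing import Dict, List


def mentions(text: str, name: str) -> bool:
    """Case-insensitive whole-word match for a brand name."""
    pattern = r"(?<!\w)" + re.escape(name) + r"(?!\w)"
    return re.search(pattern, text, flags=re.IGNORECASE) is not None


def competitor_gaps(
    responses: Dict[str, str], brand: str, competitors: List[str]
) -> Dict[str, List[str]]:
    """Return {prompt: competitors named} for prompts whose answer names
    at least one competitor but not the brand."""
    gaps: Dict[str, List[str]] = {}
    for prompt, answer in responses.items():
        if mentions(answer, brand):
            continue  # the brand is present, so this prompt is not a gap
        surfaced = [c for c in competitors if mentions(answer, c)]
        if surfaced:
            gaps[prompt] = surfaced
    return gaps


if __name__ == "__main__":
    answers = {
        "Best CRM for startups": "Globex and Initech lead this category.",
        "Acme vs Globex": "Acme is cheaper; Globex has more integrations.",
        "CRM tips": "Start with a spreadsheet.",
    }
    print(competitor_gaps(answers, "Acme", ["Globex", "Initech"]))
    # {'Best CRM for startups': ['Globex', 'Initech']}
```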

Analyzing Sentiment and Brand Framing

Not all AI mentions are positive. Sentiment analysis evaluates whether AI platforms describe your brand favorably, neutrally, or negatively. Contextual framing also matters, such as whether your brand is associated with innovation, reliability, affordability, or outdated solutions.

Negative or misleading sentiment signals a need for content clarification, stronger authority signals, or improved reputation management across the web.
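The sketch below is a deliberately simple illustration of framing analysis. It tags sentences that name your brand using a small keyword lexicon built from the framings above. The lexicon and function names are hypothetical. Keyword matching misses negation and sarcasm, so a production setup would more likely use a trained sentiment classifier or an LLM-based judge.

```python
import re
from typing import Dict, List

# Hypothetical lexicon built from the framings above; tune it for your industry.
FRAMING_KEYWORDS: Dict[str, List[str]] = {
    "innovation": ["innovative", "cutting-edge", "modern"],
    "reliability": ["reliable", "trusted", "stable"],
    "affordability": ["affordable", "budget", "cheap", "low-cost"],
    "outdated": ["outdated", "legacy", "dated", "clunky"],
}


def brand_sentences(text: str, brand: str) -> List[str]:
    """Split the answer into sentences and keep only those naming the brand."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [s for s in sentences if brand.lower() in s.lower()]


def framing_tags(text: str, brand: str) -> Dict[str, int]:
    """Count lexicon keywords that share a sentence with the brand, per framing."""
    counts = {tag: 0 for tag in FRAMING_KEYWORDS}
    for sentence in brand_sentences(text, brand):
        lowered = sentence.lower()
        for tag, words in FRAMING_KEYWORDS.items():
            counts[tag] += sum(
                1 for w in words if re.search(r"\b" + re.escape(w) + r"\b", lowered)
            )
    return counts


if __name__ == "__main__":
    answer = "Acme is a reliable, affordable option. Globex is innovative. Some reviewers call Acme dated."
    print(framing_tags(answer, "Acme"))
    # {'innovation': 0, 'reliability': 1, 'affordability': 1, 'outdated': 1}
```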

Tracking Visibility Changes Over Time

LLM visibility is dynamic, not static. Monitoring involves repeating the same prompt tests regularly to track changes in mentions, citations, and competitor positioning. Historical analysis shows whether optimization efforts are improving visibility or whether competitors are gaining ground.

This time-based tracking transforms AI visibility from a one-time audit into an ongoing performance metric.
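To turn repeated runs into a trend, you can compute the share of responses per platform that mention your brand for each snapshot date, then compare snapshots. The `(date, platform, mentioned)` record layout used below is an assumption for illustration.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# Assumed record layout: (snapshot_date, platform, brand_mentioned)
Record = Tuple[str, str, bool]


def mention_rate_by_snapshot(records: Iterable[Record]) -> Dict[str, Dict[str, float]]:
    """Return {snapshot_date: {platform: share of responses that mention the brand}}."""
    hits: Dict[str, Dict[str, int]] = defaultdict(lambda: defaultdict(int))
    totals: Dict[str, Dict[str, int]] = defaultdict(lambda: defaultdict(int))
    for date, platform, mentioned in records:
        totals[date][platform] += 1
        hits[date][platform] += int(mentioned)
    return {
        date: {p: hits[date][p] / n for p, n in per_platform.items()}
        for date, per_platform in totals.items()
    }


def rate_change(
    rates: Dict[str, Dict[str, float]], earlier: str, later: str
) -> Dict[str, float]:
    """Percentage-point change per platform between two snapshots."""
    common = sorted(set(rates[earlier]) & set(rates[later]))
    return {p: round((rates[later][p] - rates[earlier][p]) * 100, 1) for p in common}


if __name__ == "__main__":
    log = [
        ("2025-01-06", "chatgpt", True), ("2025-01-06", "chatgpt", False),
        ("2025-01-06", "perplexity", False), ("2025-01-13", "chatgpt", True),
        ("2025-01-13", "chatgpt", True), ("2025-01-13", "perplexity", True),
    ]
    rates = mention_rate_by_snapshot(log)
    print(rate_change(rates, "2025-01-06", "2025-01-13"))
    # {'chatgpt': 50.0, 'perplexity': 100.0}
```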

How to Set Up Your First LLM Tracking Campaign in 5 Minutes

Monitoring your brand’s AI visibility doesn’t have to be complicated. Before investing in high-end enterprise tools, you can establish a baseline using this simple 5-minute manual workflow.

Step 1: Curate Your 10 “Money Prompts”

Don’t track random keywords. Focus on “Money Prompts”: the specific questions that lead high-intent users to a purchase decision. Divide them into three categories:

- Category Leaders: “What are the top 5 [Product Category] software for 2026?”

- Direct Comparisons: “Compare [Your Brand] vs [Top Competitor] for enterprise security.”

- Problem-Solution: “How can I automate [Specific Pain Point] for my marketing team?”
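If you track the same prompt set for several brands or categories, templating keeps the wording identical across runs. The minimal sketch below assumes Python `str.format` placeholders such as `{category}` and `{brand}` stand in for the bracketed slots above. The placeholder names and `build_prompts` are illustrative.

```python
from typing import Dict, List, Tuple

# Templates mirror the three "Money Prompt" categories; placeholder names are illustrative.
TEMPLATES: Dict[str, List[str]] = {
    "category_leaders": ["What are the top 5 {category} software for 2026?"],
    "direct_comparisons": ["Compare {brand} vs {competitor} for enterprise security."],
    "problem_solution": ["How can I automate {pain_point} for my marketing team?"],
}


def build_prompts(values: Dict[str, str]) -> List[Tuple[str, str]]:
    """Fill every template and return (category, prompt) pairs.

    str.format raises KeyError for a missing placeholder value, so a typo
    fails loudly instead of producing a malformed prompt.
    """
    return [
        (group, template.format(**values))
        for group, templates in TEMPLATES.items()
        for template in templates
    ]


if __name__ == "__main__":
    for group, prompt in build_prompts({
        "category": "CRM", "brand": "Acme", "competitor": "Globex",
        "pain_point": "lead scoring",
    }):
        print(f"{group}: {prompt}")
```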

Step 2: Execute Prompts Across Major AI Engines

Don’t rely on a single model. Run your 10 prompts across at least the “Big Three” to see how their answers and cited sources differ:

- ChatGPT (OpenAI): Answers tend to reflect historical reputation and general consensus from its training data, although web search can bring in newer sources.

- Perplexity AI: Operates like a real-time search engine; look for direct citations and links.

- Google AI Overviews (Gemini): AI Overviews appear directly in Google Search results, so visibility here can affect your organic traffic.

Step 3: Log Data in a Visibility Tracking Matrix

Create a simple spreadsheet to record your findings. This allows you to track patterns over time rather than looking at one-off answers. Use these columns:

| Priority Prompt | AI Platform | Brand Mentioned? | Citation/Link? | Competitors Surfaced |
| --- | --- | --- | --- | --- |
| “Best [Your Category] for startups” | ChatGPT | Yes | No | Competitor A, B |
| “[Your Brand] vs [Competitor] features” | Perplexity | Yes | Yes (Direct Link) | Competitor A |
| “Top rated [Product] in 2026” | Gemini | No | No | Competitor B, C |
| “How to solve [Problem] with software” | Claude | Yes | No | Competitor D |
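If you outgrow a manual spreadsheet, you can write the same matrix to a CSV file that any spreadsheet tool can open. This sketch uses Python's standard `csv` module. The snake_case column names are assumptions, and so is the extra `date` column, added so that rows from different runs can be compared. Neither is part of the original matrix.

```python
import csv
import io
import os
from typing import Dict, List

# Snake_case versions of the matrix columns, plus an assumed "date" column.
COLUMNS = [
    "date", "priority_prompt", "ai_platform",
    "brand_mentioned", "citation_link", "competitors_surfaced",
]


def rows_to_csv(rows: List[Dict[str, str]], include_header: bool = True) -> str:
    """Render matrix rows as CSV text; fields containing commas are quoted."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=COLUMNS, lineterminator="\n")
    if include_header:
        writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()


def append_rows(path: str, rows: List[Dict[str, str]]) -> None:
    """Append rows to the tracking file, writing the header only if the file is new or empty."""
    is_new = not os.path.exists(path) or os.path.getsize(path) == 0
    with open(path, "a", newline="", encoding="utf-8") as f:
        f.write(rows_to_csv(rows, include_header=is_new))


if __name__ == "__main__":
    example = {
        "date": "2025-01-06",
        "priority_prompt": "Best [Your Category] for startups",
        "ai_platform": "ChatGPT",
        "brand_mentioned": "Yes",
        "citation_link": "No",
        "competitors_surfaced": "Competitor A, B",
    }
    print(rows_to_csv([example]), end="")
```

Calling `append_rows` after each monitoring run builds up one long-format history file. That file is what makes week-over-week comparisons possible.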

Step 4: Perform the “First Paragraph” Analysis

In LLM answers, the first paragraph is the prime real estate. AI models often list the brands they consider most trusted or relevant first.

- Primary Mention: Is your brand the first one mentioned? (High Authority)

- Secondary Mention: Are you listed as an “Alternative” or “Budget-friendly” option? (Brand Perception)

- The Exclusion Gap: If you aren’t in the first two sentences, you are likely to be effectively invisible to the user.
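You can approximate the position check in code. The sketch below assumes that paragraphs are separated by blank lines and that sentences end in `.`, `!`, or `?`. It looks only at position. It labels a brand "secondary" when a tracked competitor is named before it in the opening sentences, but it does not check whether the wording frames the brand as an alternative or budget option. Function names and labels are illustrative.

```python
import re
from typing import List


def first_sentences(text: str, n: int = 2) -> str:
    """First n sentences of the answer's first paragraph (paragraphs split on blank lines)."""
    first_para = text.strip().split("\n\n")[0]
    sentences = re.split(r"(?<=[.!?])\s+", first_para)
    return " ".join(sentences[:n])


def first_position(text: str, name: str) -> int:
    """Character offset of the first whole-word, case-insensitive mention, or -1."""
    m = re.search(r"(?<!\w)" + re.escape(name) + r"(?!\w)", text, flags=re.IGNORECASE)
    return m.start() if m else -1


def placement(text: str, brand: str, competitors: List[str]) -> str:
    """Label the brand 'primary', 'secondary', 'late', or 'absent' by position."""
    if first_position(text, brand) == -1:
        return "absent"
    opening = first_sentences(text)
    pos = first_position(opening, brand)
    if pos == -1:
        return "late"  # mentioned, but outside the opening: the exclusion gap
    rivals = [p for p in (first_position(opening, c) for c in competitors) if p != -1]
    return "primary" if not rivals or pos < min(rivals) else "secondary"


if __name__ == "__main__":
    rivals = ["Globex", "Initech"]
    print(placement("Acme leads the market. Globex follows.", "Acme", rivals))  # primary
    print(placement("Globex is the top pick. Acme is a budget alternative.", "Acme", rivals))  # secondary
    print(placement("Globex leads. Initech is solid. Teams also like Acme.", "Acme", rivals))  # late
    print(placement("Globex leads.", "Acme", rivals))  # absent
```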

Step 5: Identify the “Missing Source”

If the AI is excluding your brand, look at the sources it is citing.

Expert Tactic: Click the citations (the small numbers or links) in the AI response. If the AI is quoting a specific review site or a competitor’s blog, that is exactly where you need to earn a mention or a guest post so your brand appears in the sources the AI draws on.
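Instead of clicking through one answer at a time, you can tally the domains cited in answers that leave your brand out. This sketch assumes citations appear as raw URLs in the response text and uses a simple case-insensitive substring check for the brand. `missing_sources` is a hypothetical helper name.

```python
import re
from collections import Counter
from typing import Iterable, List, Tuple
from urllib.parse import urlparse

# Matches http(s) URLs up to whitespace, brackets, or quotes.
URL_RE = re.compile(r"https?://[^\s<>()\[\]\"']+")


def cited_domains(text: str) -> List[str]:
    """Hostnames of all URLs in an answer, lowercased and without a leading 'www.'."""
    hosts = []
    for url in URL_RE.findall(text):
        host = (urlparse(url.rstrip(".,;:!?")).hostname or "").lower()
        if host:
            hosts.append(host[4:] if host.startswith("www.") else host)
    return hosts


def missing_sources(answers: Iterable[str], brand: str, top: int = 5) -> List[Tuple[str, int]]:
    """Most-cited domains across answers that never mention the brand."""
    tally: Counter = Counter()
    for text in answers:
        if brand.lower() not in text.lower():
            tally.update(set(cited_domains(text)))  # count each domain once per answer
    return tally.most_common(top)


if __name__ == "__main__":
    answers = [
        "Globex is a favorite [https://www.g2.com/crm]. See https://reviewsite.example/best.",
        "Try Globex (https://g2.com/x).",
        "Acme is strong: https://acme.com",
    ]
    print(missing_sources(answers, "Acme"))
    # [('g2.com', 2), ('reviewsite.example', 1)]
```

The domains at the top of this list are the candidates for outreach, reviews, or guest posts.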

Key Metrics Used in LLM Visibility Monitoring

Brand Mentions in AI Responses

Brand mentions measure how frequently your brand appears when AI systems answer relevant questions. A high mention frequency indicates strong topical relevance and recognition within AI models.

Citations and Source Attribution

Citations occur when AI platforms reference your website or content as a source. They are among the strongest indicators of trust and authority. Monitoring citations helps identify which pages AI prefers and which content formats perform best.

Share of Voice in AI Search

Share of voice compares your brand’s visibility against competitors across the same AI prompts. This metric shows whether your brand leads the conversation, competes evenly, or remains invisible in AI-driven discovery.
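Share of voice has no single standard formula. One common version, assumed here, divides the number of responses that mention a brand by the total mentions across all tracked brands. With this definition, the shares sum to 1 whenever at least one brand is mentioned.

```python
import re
from typing import Dict, Iterable, List


def mentions(text: str, name: str) -> bool:
    """Case-insensitive whole-word match for a brand name."""
    pattern = r"(?<!\w)" + re.escape(name) + r"(?!\w)"
    return re.search(pattern, text, flags=re.IGNORECASE) is not None


def share_of_voice(answers: Iterable[str], brands: List[str]) -> Dict[str, float]:
    """SoV(b) = responses mentioning b / sum over tracked brands of responses mentioning them."""
    counts = {b: 0 for b in brands}
    for text in answers:
        for b in brands:
            if mentions(text, b):
                counts[b] += 1  # at most one count per brand per response
    total = sum(counts.values())
    return {b: (c / total if total else 0.0) for b, c in counts.items()}


if __name__ == "__main__":
    answers = [
        "Acme and Globex both fit.",
        "Globex is the default choice.",
        "Initech, Globex, and Acme all work.",
        "Use a spreadsheet.",
    ]
    sov = share_of_voice(answers, ["Acme", "Globex", "Initech"])
    print({b: round(s, 2) for b, s in sov.items()})
    # {'Acme': 0.33, 'Globex': 0.5, 'Initech': 0.17}
```

An alternative definition divides by the number of responses instead of total mentions, which answers "how often do we appear?" rather than "how much of the conversation do we own?". Pick one and keep it fixed so trends stay comparable.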

Sentiment and Context Analysis

Sentiment analysis evaluates the tone of AI references, while context analysis examines how your brand is positioned within the answer. Together, they determine whether AI visibility supports or undermines your brand strategy.

Best Tools for Tracking LLM Brand Visibility

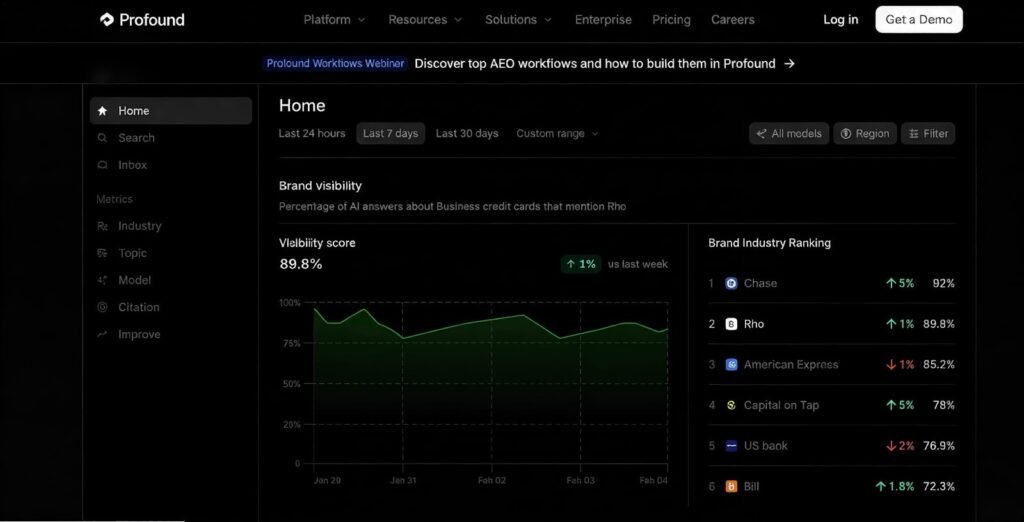

Profound: Enterprise-Grade LLM Visibility Analysis Tool

Profound positions itself as an enterprise-level solution for monitoring brand mentions across large language models. It focuses heavily on competitive intelligence, data accuracy, and customizable reporting for complex organizations.

From experience, Profound excels at capturing query variations, reducing the risk of missing a brand mention because of differences in prompt phrasing. Its reporting system allows teams to create tailored dashboards for executives, marketers, and analysts, making it ideal for large enterprises and agencies.

However, the platform is built for scale. Smaller teams may find the onboarding process lengthy and the pricing high, starting at $499 per month for the Lite plan, with enterprise pricing customized based on usage.

Otterly AI: Share of Voice–Focused LLM Visibility Tracking Tool

Otterly AI is designed to clearly show how much of the AI conversation your brand owns compared to competitors. It tracks share of voice across ChatGPT, Claude, Perplexity, and similar platforms, making it a strong LLM visibility tracking tool for competitive markets.

What stands out is Otterly’s historical tracking. Brands can see whether their AI visibility is improving over time and which prompts drive the most mentions. This makes optimization progress measurable rather than theoretical.

The limitation is that Otterly focuses more on measurement than strategy. It tells you what is happening, not how to fix it. Pricing starts at $27 per month, making it accessible for growing teams.

Authoritas: SEO-Integrated AI Visibility Monitoring

Authoritas integrates AI brand monitoring into its broader SEO platform. It allows teams to track brand mentions in AI search engines while comparing them with traditional SEO performance.

The biggest advantage is visibility across channels. You can see whether pages ranking well in Google Search also perform well in AI-generated answers. Citation tracking also reveals which sources AI models trust most when referencing your brand.

Because it is SEO-first, the AI monitoring features are not as deep as standalone tools. Pricing is custom and depends on the full SEO package selected.

Writesonic: AI Content Creation Plus Visibility Tracking

Writesonic combines AI visibility monitoring with content creation. Its AI Search Visibility tool tracks how brands appear in AI responses while suggesting content improvements to increase visibility.

This dual approach makes it useful for content teams that want to move quickly from insight to execution. The platform shows which content types perform best in AI answers and helps replicate that success.

The trade-off is depth. Dedicated LLM visibility analysis tools may provide more granular insights. Pricing starts at $39 per month.

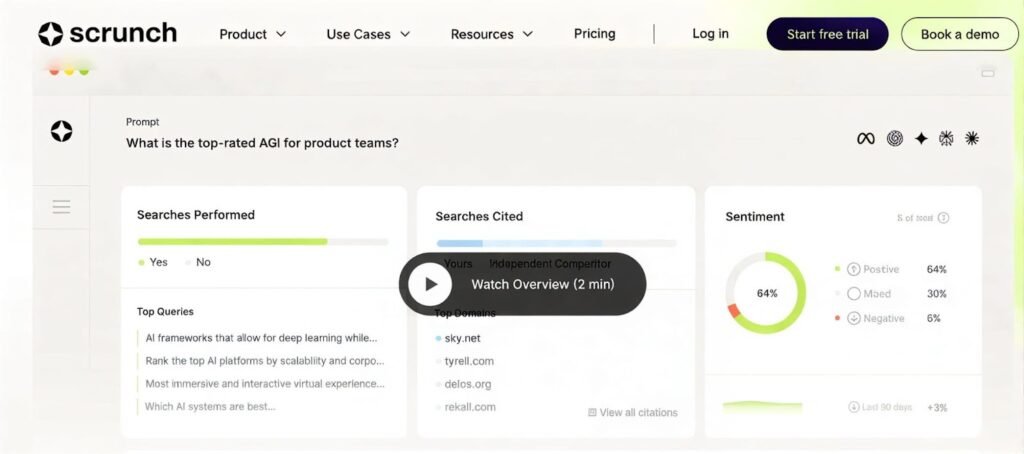

Scrunch: GEO-Focused LLM Monitoring Platform

Scrunch is built around generative engine optimization. It tracks brand presence across AI platforms and audits webpages to identify optimization gaps.

From experience, Scrunch is particularly strong in prompt testing and persona-based analysis. This allows teams to see how different audience types encounter their brand in AI answers and where coverage is missing.

Pricing starts at $300 per month, making it suitable for teams actively investing in AI search optimization strategies.

XFunnel: Measuring Business Impact of AI Visibility

XFunnel connects AI visibility to revenue outcomes. Instead of just tracking mentions, it shows how AI platforms influence traffic, leads, and conversions.

This makes it especially valuable for B2B teams that need to justify AI optimization efforts in business terms. XFunnel highlights missed opportunities where competitors appear in high-intent AI queries while your brand does not.

Setup requires integration with sales and analytics tools, and pricing is customized based on tracking needs.

Brand24: Supporting LLM Visibility Through Reputation Signals

Brand24 does not directly track AI responses, but it helps assess how your brand’s online reputation influences LLM perception. Since AI models rely on authoritative third-party mentions, sentiment and volume analysis provide early signals of future AI visibility.

It is particularly useful for PR and reputation management teams. Pricing starts at $149 per month after a free trial.

Comparison Table: Best Tools for Tracking LLM Brand Visibility

| Tool Name | Core Strength | Pros | Cons | Starting Price |
| --- | --- | --- | --- | --- |
| Profound | Uses direct API integration with LLMs for 99% data accuracy. | Enterprise-grade depth; tracks “hallucination” risks. | Steep learning curve; high entry cost. | $499/month |
| Otterly AI | Specializes in “Share of Voice” (SoV) metrics for ChatGPT. | Best visual dashboard for historical trends. | Limited technical SEO audit features. | $27/month |
| Authoritas | Maps AI Overviews (SGE) directly against organic rankings. | Bridges the gap between traditional SEO and AI. | Can be overwhelming for non-SEO users. | Custom Pricing |
| Writesonic | Uses AI to suggest real-time content edits for GEO. | Seamless workflow from monitoring to creation. | Not as deep in competitive sentiment analysis. | $39/month |
| Scrunch | Focuses on “Persona-based” testing (how different users see you). | Excellent for auditing specific landing pages. | Higher price point for small businesses. | $300/month |
| XFunnel | Tracks the “Attribution Path” from AI mention to CRM lead. | Directly measures ROI and revenue impact. | Requires complex integration with sales tools. | Free plan / Custom |
| Brand24 | Monitors 25+ social/web sources that may feed LLM training sets. | Great for tracking “Off-Page” authority signals. | Indirect tracking; doesn’t query LLMs directly. | $149/month |

Our Experience: Strategy That Improved LLM Visibility

From our experience, the biggest improvement came from combining multi-platform monitoring with structured content optimization. We tracked the same high-intent prompts across AI engines, identified where competitors dominated, and restructured content into answer-first formats supported by authoritative citations. Over time, this approach increased both mentions and direct citations. Automated monitoring reduced guesswork and allowed us to focus only on queries with real business impact.

How to Choose the Right LLM Visibility Tracking Tool

The right tool depends on business size and goals. Enterprises benefit from deep reporting and customization, while smaller teams may prioritize ease of use and affordability. The most important factors are accurate citation tracking, multi-platform coverage, historical comparisons, and actionable insights.

Final Thoughts on Monitoring LLM Visibility

Monitoring LLM visibility is no longer optional. Brands that understand how AI systems mention, cite, and frame them gain early influence in AI-driven discovery. By following a structured monitoring process and supporting it with the best tools for tracking LLM brand visibility, companies can turn AI answers into a long-term competitive advantage instead of leaving visibility to chance.

Frequently Asked Questions

What is LLM brand visibility?

LLM brand visibility refers to how often and in what context your brand appears in AI-generated answers on platforms like ChatGPT, Gemini, and Perplexity. It focuses on mentions, citations, and sentiment rather than rankings or clicks.

How do you monitor LLM visibility of a brand?

LLM visibility is monitored by testing real user prompts across AI platforms and analyzing brand mentions, citations, sentiment, and competitor presence within AI responses over time.

Why is LLM visibility important for SEO?

LLM visibility influences how users discover brands through AI answers. If your brand is not cited or mentioned, you lose authority and early buyer influence even if your traditional SEO is strong.

What are the best tools for tracking LLM brand visibility?

The best tools for tracking LLM brand visibility include Profound, Otterly AI, Scrunch, Authoritas, Writesonic, and XFunnel. Each tool focuses on different aspects such as share of voice, citations, or revenue impact.

What metrics are used in LLM visibility monitoring?

Key metrics include brand mentions, direct citations, share of voice against competitors, sentiment of AI mentions, and content readiness for AI extraction.

How often should LLM visibility be monitored?

Monitoring frequency depends on industry pace. Fast-moving industries should monitor weekly or daily, while stable sectors can review visibility trends on a monthly basis.

Can LLM visibility drive traffic and leads?

Yes, when AI platforms cite your content as a source, users can visit your website directly. Over time, strong AI visibility also improves brand trust and conversion potential.

How does sentiment affect AI visibility?

Positive and neutral sentiment strengthens authority, while negative or misleading mentions can harm trust. Monitoring sentiment helps brands adjust messaging and content strategy.

Is LLM visibility different from traditional SEO?

Yes, traditional SEO focuses on rankings and clicks, while LLM visibility focuses on how AI models select, summarize, and cite trusted brands in a single answer.

How can brands improve LLM visibility after monitoring?

Brands can improve visibility by restructuring content into answer-first formats, adding structured data, earning authoritative mentions, and addressing gaps where competitors dominate AI answers.